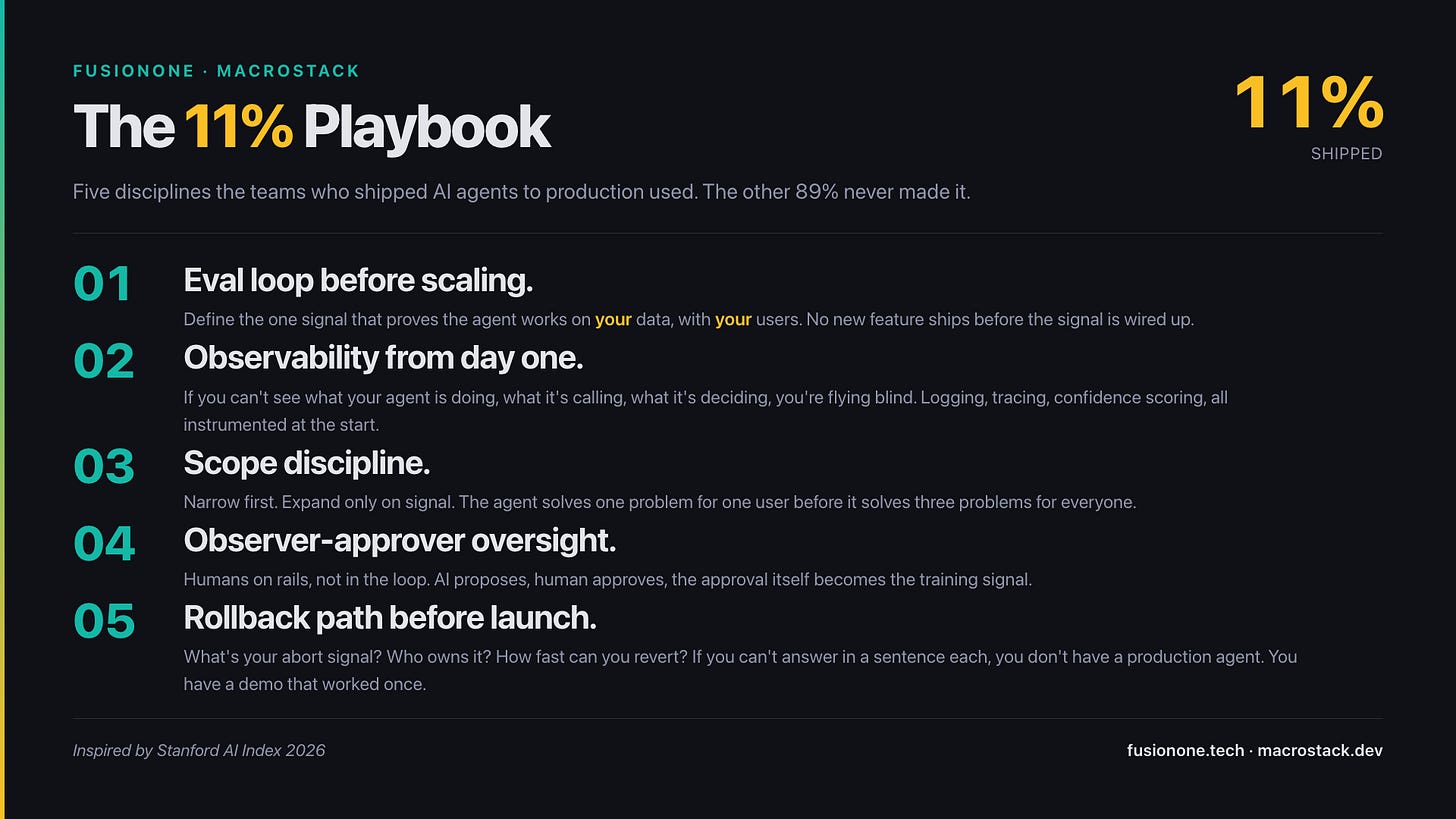

89% of Enterprise AI Agents Never Reach Production. Here's the 11% Playbook.

Stanford just released the number. The four big AI labs just shipped runtimes to fix it. Better infrastructure isn't the answer.

Stanford’s AI Index dropped this month. One number stopped me: 89% of enterprise AI agents never reach production.

Same two weeks, the major labs each shipped a runtime to fix it. Anthropic launched Managed Agents on April 8. Google rolled out Gemini Enterprise Agent Platform. Snowflake shipped Cortex agents. OpenAI announced Workspace Agents. YC’s W26 demo day was 88% AI-first, with nearly one in three startups building autonomous agents. Everyone is racing to fix the 89%.

You’ve been watching this from the inside. The decks look good. The demos look good. The contracts are signed. And somewhere between the second sprint and the staging environment, the project quietly stops shipping.

Here’s the thing: better runtimes won’t save you. The 89% isn’t a model problem. It’s not an infrastructure problem. It’s a discipline problem masquerading as an AI problem.

The Failure Isn’t the Model

Claude Opus 4.7 hit 87.6% on SWE-bench Verified. OSWorld task-success scores went from 12% to 66.3% in a year. The models are fine. The harnesses are fine. What fails is everything around the agent: there’s no eval loop, no observability, no scope discipline, no rollback path. The agent works in the demo, then drifts in production, and nobody can see what changed.

I’ve watched this from both sides.

A few years back, my team was working on a supply chain AI. Three engineers, more than a month, and we never fully shipped it. Solid team, real funding, the model behaved. We just didn’t have the discipline around it. We were always one demo away from “ready,” and one production behavior away from a rollback we couldn’t actually trigger.

A year later, solo, I shipped the same domain in two to three days. Same kind of supply chain problem. Different outcome. The variable wasn’t the AI. It was the architecture around the AI.

What the 11% Did Differently

The first thing I built into the FusionOne agent was the eval architecture, not the agent. The pattern: AI proposes, human approves, signal compounds, scope expands only on signal. AI does most of the thing. The user is the observer and the approver. That’s not a slogan. That’s an eval loop drawn in production from day one. When the agent surfaces a quotation worth re-quoting, a human accepts or rejects. Every accept-reject is a labeled signal. The scope only grew when the signal said it should.

The second lesson came from Toshi. We’ve been through three pivots in six months. V1 was AI-powered journaling. Adoption hit a wall because journaling needs behavior change people aren’t willing to make. V2 reframed around the home as a collaboration space. The frame was too narrow. V3 is the AI calendar we ship today, currently live, around 50 active users, 80-90% built with Claude Code. Each pivot was a real eval cycle on the product, not the model. Scope discipline isn’t a planning move, it’s an eval loop you run on yourself.

The 89% don’t do this. They build the agent, scale the infrastructure, and hope the demo holds.

The 11% Playbook

I’m not claiming these are the only five disciplines. They’re the five I keep coming back to. The ones the teams who shipped agents to production actually got right:

Eval loop before scaling. Define the one signal that proves the agent works on your data, with your users. No new feature ships before the signal is wired up.

Observability built in from day one. If you can’t see what your agent is doing, what it’s calling, what it’s deciding, you’re flying blind. Logging, tracing, confidence scoring, all instrumented at the start, not after the first incident.

Scope discipline. Narrow first, expand only on signal. The agent solves one problem for one user before it solves three problems for everyone. Toshi’s three pivots are this rule made visible.

Observer-approver oversight. Humans on rails, not in the loop. AI proposes, human approves, the approval itself is the training signal. This pattern shipped FusionOne in days, not months.

Rollback path before launch. What’s your abort signal? Who owns it? How fast can you revert? If you can’t answer those three questions in a sentence each, you don’t have a production agent. You have a demo that worked once.

The Question Worth Asking

Pull up your last AI agent project. On the back of a napkin, can you write the eval signal that says it’s working, and the rollback trigger that says it isn’t?

If you can, congratulations. You’re already running the 11% Playbook.

If you can’t, that’s why it stalled. And no amount of Managed Agents, Gemini Enterprise, or Cortex is going to change that, because you’d be installing a runtime to fix a discipline problem.

The 89% will keep buying infrastructure. The 11% will keep shipping agents. Which side is your team building for?