93% of Engineers Use AI. Productivity Went Up 10%. Something Doesn't Add Up.

Everyone's diagnosing why AI only gives 10%. Nobody's explaining why the top quartile gets 2x.

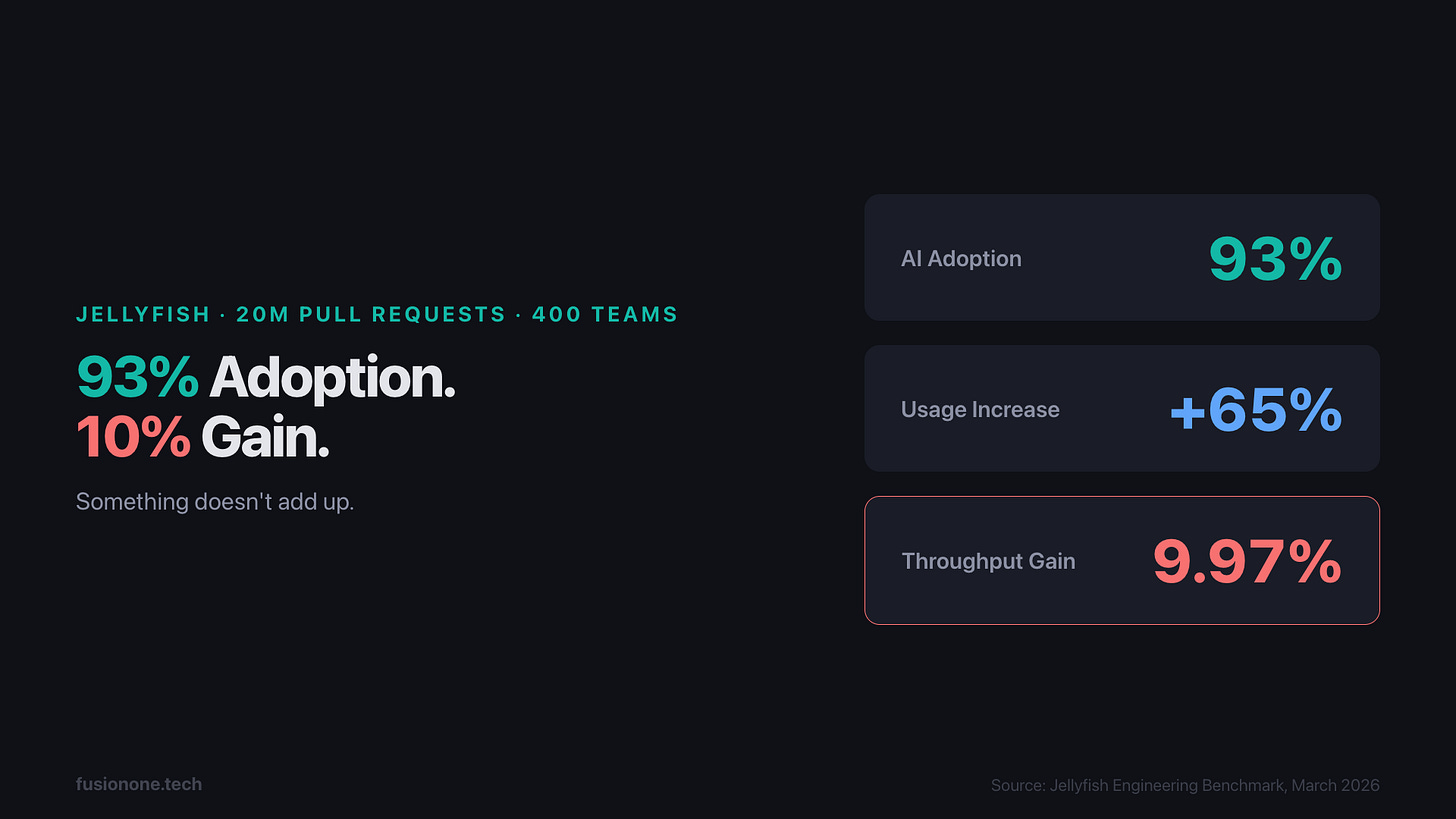

Jellyfish just analyzed 20 million pull requests across 400 engineering teams. AI tool usage is up 65%. PR throughput? Up 9.97%.

That’s not a rounding error. That’s a signal.

The industry spent two years telling you AI would change everything. Billions in tool subscriptions. Every IDE has a copilot now. Every team has a ChatGPT tab open somewhere. And the aggregate result across 20 million PRs is... ten percent.

But here’s what makes the data interesting. The top-quartile AI adopters in the same study see 2x throughput. Same tools. Same models. Wildly different outcomes. So the question isn’t whether AI works. It clearly does, for some. The question is why most engineers aren’t getting there.

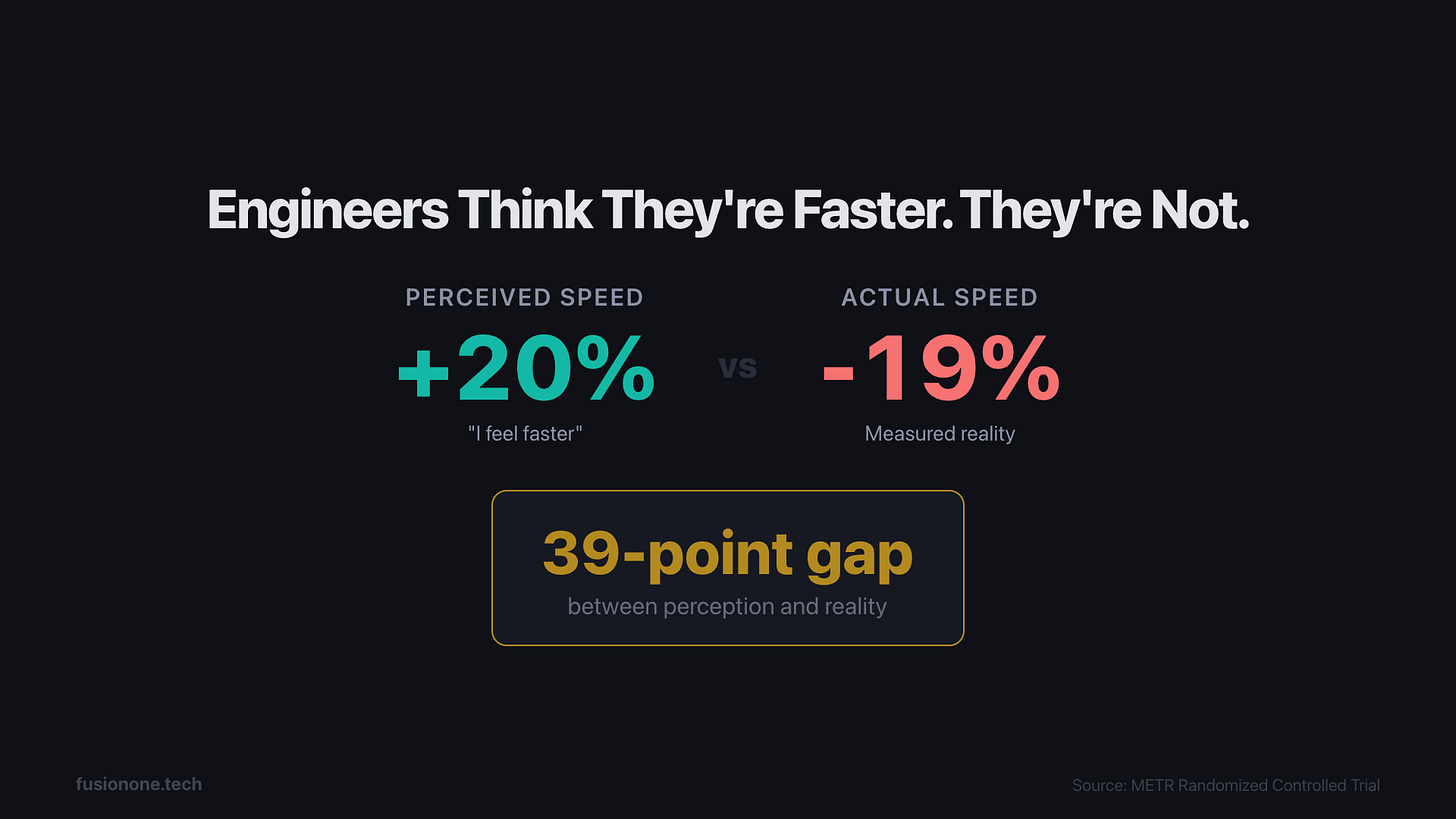

The 39-Point Perception Gap

Here’s the uncomfortable part. A controlled study gave experienced open-source developers real tasks with and without AI assistance. The developers using AI were 19% slower. But when asked? They said they were 20% faster.

That’s a 39-point gap between perception and reality. It might be the most important number in this entire conversation.
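The arithmetic behind that number, as a quick sketch. The function name is made up for illustration; the two inputs are the figures quoted above, with "slower" expressed as a negative speedup.

```python
def perception_gap(perceived_speedup_pct: float, measured_speedup_pct: float) -> float:
    """Gap, in percentage points, between how fast people felt and how fast they were."""
    return perceived_speedup_pct - measured_speedup_pct


# Figures from the study described above:
perceived = 20.0   # developers said they were 20% faster
measured = -19.0   # they were measured as 19% slower

gap = perception_gap(perceived, measured)
print(f"Perception gap: {gap:.0f} points")
```

Note that these are percentage points, not a percent change. The gap is the distance between two readings on the same scale.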

It explains why nobody’s panicking. You feel faster. The autocomplete fires. The chat gives you answers. Code appears on screen. You’re moving. But the Faros AI data across 10,000 developers tells a different story: teams with high AI adoption merged 98% more pull requests, but review time increased 91% and bugs went up 9%. DORA metrics? Flat. More PRs, bigger PRs, slower reviews, more bugs, same delivery performance.

More code is not more output.

A 2026 Tool With a 2023 Workflow

Most engineers bolted AI onto an unchanged workflow. That’s the pattern behind the 10%.

The first pattern is using AI as autocomplete: tab-completing lines of code slightly faster. You’re still reading every line. You’re still the bottleneck. You saved keystrokes, not hours.

The second is copy-pasting code into a chat window and asking “what’s wrong with this?” No codebase context. No architecture awareness. The AI gives generic answers because it received a generic question.

And the biggest one: prompting without structure. No project conventions. No architectural constraints. No patterns to follow. It’s like handing a contractor a blank sheet of paper and expecting production-quality work on day one. You wouldn’t do that to a junior developer. But most engineers do it to AI every single session.

Gergely Orosz wrote about this two days ago. Uber built twelve internal systems just to deal with the quality problems from AI-generated code. When the tool generates more code without the right context, you don’t get productivity. You get cleanup.

The problem isn’t that AI doesn’t work. It’s that most teams are using a 2026 tool with a 2023 workflow.

What the Top Quartile Does Differently

The Jellyfish study has another finding that most coverage is ignoring: centralized codebases with structured context see 4x productivity gains. Distributed architectures with scattered context? Near zero.

Context is the multiplier. And the engineers getting 2x aren’t using different tools. They’re operating differently.

They treat context as infrastructure. Not a prompt you type each time, but an architecture document the AI reads every session. Project conventions, patterns, constraints, all front-loaded. The AI starts every conversation already knowing how the codebase works. Most engineers skip this entirely and wonder why the output feels generic.
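A minimal sketch of that idea, assuming the conventions live in one file that gets prepended to every task. The file name `AI_CONTEXT.md`, the function names, and the section headings are all hypothetical. Many tools have their own mechanism for a standing context file, and this only illustrates the principle.

```python
from pathlib import Path


def load_project_context(path: str = "AI_CONTEXT.md") -> str:
    """Read the standing context document; return an empty string if it is missing."""
    p = Path(path)
    return p.read_text(encoding="utf-8") if p.exists() else ""


def build_prompt(context: str, task: str) -> str:
    """Front-load project context ahead of the task so every session starts informed."""
    sections = []
    if context.strip():
        sections.append("## Project context\n" + context.strip())
    sections.append("## Task\n" + task.strip())
    return "\n\n".join(sections)


if __name__ == "__main__":
    # Hypothetical task; the context comes from the file, not from what you type.
    prompt = build_prompt(load_project_context(), "Add a retry helper to the HTTP client.")
    print(prompt)
```

The point of the design is that the context is written once and versioned with the code, so improving it improves every future session.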

They decompose before they delegate. Instead of handing AI one big task and getting one mediocre result, they break work into focused, parallelizable units. Four agents, four isolated branches, each with a clear scope. The decision of what to parallelize matters more than the parallelism itself. One vague task produces vague output. Four focused tasks produce four solid results.
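One way to sketch the "four agents, four isolated branches" setup is with `git worktree`, which gives each branch its own working directory. The unit names, the `agent/` branch prefix, and the `../worktrees` directory are assumptions for illustration. The script only builds the commands; it does not run them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkUnit:
    name: str   # short slug, reused as branch suffix and directory name
    scope: str  # one sentence stating what this agent is allowed to change


def worktree_commands(units, base="main", root="../worktrees"):
    """Return one `git worktree add` command per unit, each on its own new branch."""
    names = [u.name for u in units]
    if len(set(names)) != len(names):
        raise ValueError("unit names must be unique so branches stay isolated")
    return [
        ["git", "worktree", "add", "-b", f"agent/{u.name}", f"{root}/{u.name}", base]
        for u in units
    ]


# Hypothetical decomposition of one large task into four scoped units.
units = [
    WorkUnit("api-pagination", "Add cursor pagination to the list endpoint only."),
    WorkUnit("db-index", "Add the missing index and its migration only."),
    WorkUnit("client-retry", "Add retry with backoff to the HTTP client only."),
    WorkUnit("docs-update", "Update the API docs for the changed endpoint only."),
]

for cmd in worktree_commands(units):
    print(" ".join(cmd))
```

Writing the `scope` sentence is the real work here. If you can't state a unit's scope in one line, it probably isn't ready to delegate.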

They shift from coder to architect. They review outcomes, not keystrokes. They validate that the system behaves correctly instead of reading every line the AI wrote. This is the hardest mental shift for engineers. We built our identities around writing code. The top quartile is building their identity around designing systems that write code.

And they compound their workflows. Every solved problem becomes a reusable pattern. Templates, skills, conventions that the AI can reference next time. Most engineers start from zero each session. Top performers build institutional knowledge into their AI setup. The AI doesn’t get better because the model improves. It gets better because the context improves.
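A rough sketch of what compounding could look like in practice: a small pattern library on disk that grows each time a problem is solved and can be queried at the start of the next session. The JSON layout, the function names, and the tag scheme are assumptions for illustration, not a description of any particular tool.

```python
import json
from pathlib import Path


def record_pattern(store: Path, name: str, tags: list[str], guidance: str) -> None:
    """Add or update one reusable pattern in the JSON store."""
    patterns = json.loads(store.read_text(encoding="utf-8")) if store.exists() else {}
    patterns[name] = {"tags": sorted(set(tags)), "guidance": guidance}
    store.write_text(json.dumps(patterns, indent=2, sort_keys=True), encoding="utf-8")


def patterns_for(store: Path, tag: str) -> list[str]:
    """Return the guidance for every stored pattern carrying the given tag."""
    if not store.exists():
        return []
    patterns = json.loads(store.read_text(encoding="utf-8"))
    # Sort by pattern name so the result is stable across sessions.
    return [p["guidance"] for _, p in sorted(patterns.items()) if tag in p["tags"]]
```

After solving a problem once, you would call `record_pattern(...)` with what worked. At the start of a related task, `patterns_for(store, "api")` pulls that guidance back in so the session doesn't start from zero.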

Anthropic’s own research found that 27% of AI-assisted work consists of tasks that wouldn’t have been done otherwise. The top quartile isn’t just doing old work faster. They’re doing new work that wasn’t possible before.

One Data Point

I’ve been operating this way for over a year now. 5.1 billion tokens processed in two months. 587 sessions. Three companies running on this operating model. I’ve written about the specific workflow before: the parallel agents, the skills system, the compounding context. If you want the tactical depth, it’s there.

This isn’t a pitch for my setup. It’s a recognition that the setup matters more than the subscription.

And it’s not perfect. Agents go in circles on complex architectural decisions. Long sessions drift as context degrades. Sometimes I spend more time correcting output than it would’ve taken to write it myself. Customer conversations, product strategy, the groundwork: that’s still entirely human. Maybe it always will be.

But the difference between my results and the industry average isn’t talent. It’s the operating model.

The AI Operating Model Gap

Here’s the takeaway. AI doesn’t make you faster. Your operating model makes AI faster.

Most engineers upgraded the tool and kept the workflow. The top quartile upgraded the workflow.

The 93% adoption rate isn’t the story. The 10% gain isn’t the story. The gap between 10% and 2x is the story. And that gap has a name: the AI Operating Model Gap.

If you’re getting 10% from AI right now, you’re not doing it wrong. You’re just running new hardware on old software.

Next week, I’m writing the operating model blueprint: how to audit your current AI workflow and upgrade it step by step. This piece was the why. That one’s the playbook.